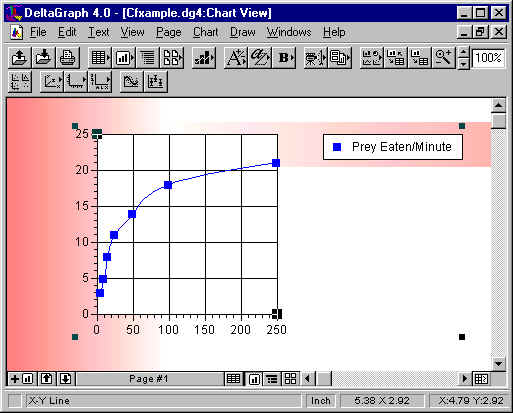

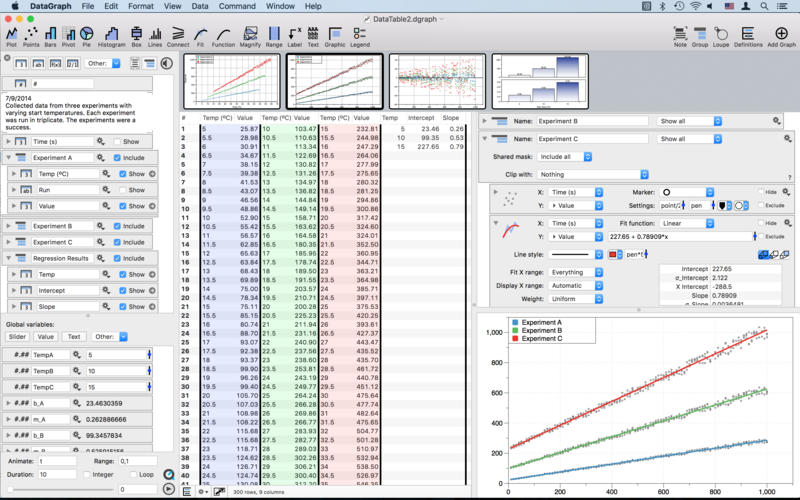

The joined header and line items fact table makes use of stream(live.table_name) to incrementally process only the new records in the upstream landing tables.
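To make the idea of incremental processing concrete, here is a minimal conceptual sketch in plain Python. It is not DLT code and does not reflect how DLT is implemented. The `IncrementalReader` class, the `join_new_line_items` function and the sample rows are all hypothetical, and the TPCH-style column names (`o_orderkey`, `l_orderkey`, `l_linenumber`) are assumed for illustration. The sketch only shows the general pattern: each update reads the rows that arrived since the previous update and joins just those rows to their header.

```python
class IncrementalReader:
    """Hypothetical stand-in for a streaming read over an append-only table."""

    def __init__(self, table):
        self._table = table   # list of row dicts that only ever grows
        self._offset = 0      # number of rows already handed out

    def read_new(self):
        """Return the rows appended since the previous call."""
        new_rows = self._table[self._offset:]
        self._offset = len(self._table)
        return new_rows


def join_new_line_items(line_item_reader, headers_by_key):
    """Join only the not-yet-processed line items to their order header."""
    joined = []
    for item in line_item_reader.read_new():
        header = headers_by_key.get(item["l_orderkey"])
        if header is not None:
            joined.append({**header, **item})
    return joined


# Illustrative data only; these rows are not taken from the demo dataset.
headers_by_key = {1: {"o_orderkey": 1, "o_orderstatus": "O"}}
line_items = [{"l_orderkey": 1, "l_linenumber": 1}]
reader = IncrementalReader(line_items)

first_update = join_new_line_items(reader, headers_by_key)   # sees line 1
line_items.append({"l_orderkey": 1, "l_linenumber": 2})
second_update = join_new_line_items(reader, headers_by_key)  # sees only line 2
third_update = join_new_line_items(reader, headers_by_key)   # nothing new
```

The second update returns only the newly appended line item, and the third returns an empty list, which is the behavior the stream(live.table_name) syntax is used for in the pipeline.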

The landing tables make use of Auto Loader as the data source, which allows you to ingest only new files landing in storage. The following example shows how the orders landing table is created:

    CREATE OR REFRESH STREAMING LIVE TABLE orders_landing
    COMMENT "The landing orders dataset, ingested from /tmp/tahirfayyaz using auto loader."
    AS SELECT * FROM cloud_files("/tmp/tahirfayyaz/orders/", "json", map(

All downstream tables can then refer to upstream tables using the stream(live.table_name) or live.table_name syntax.
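The "only ingest new files" behavior can be pictured with a small plain-Python sketch. This is a conceptual model under stated assumptions, not Auto Loader's implementation or API: the `NewFileIngester` class, the `demo` function and the file names are hypothetical, and each file is assumed to hold a JSON array of rows. Auto Loader handles this bookkeeping for you; the sketch only illustrates why files that were already ingested are not read again.

```python
import json
import tempfile
from pathlib import Path


class NewFileIngester:
    """Hypothetical helper that remembers which files were already ingested."""

    def __init__(self, directory):
        self._directory = Path(directory)
        self._seen = set()  # names of files ingested so far

    def ingest(self):
        """Load rows from JSON files that have not been ingested before."""
        rows = []
        for path in sorted(self._directory.glob("*.json")):
            if path.name in self._seen:
                continue  # skip files handled by an earlier call
            rows.extend(json.loads(path.read_text()))
            self._seen.add(path.name)
        return rows


def demo():
    """Write two files at different times and ingest after each arrival."""
    with tempfile.TemporaryDirectory() as landing_dir:
        ingester = NewFileIngester(landing_dir)

        Path(landing_dir, "orders_001.json").write_text(json.dumps([{"order_id": 1}]))
        first = ingester.ingest()

        Path(landing_dir, "orders_002.json").write_text(json.dumps([{"order_id": 2}]))
        second = ingester.ingest()

        third = ingester.ingest()  # no new files have landed
    return first, second, third
```

Calling `demo()` returns three batches: the rows from the first file, the rows from the second file only, and an empty list once nothing new has arrived.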

In this guide, we will show you how to develop a Delta Live Tables (DLT) pipeline to create, transform and update your Delta Lake tables, and then build the matching data model in Power BI by connecting to a Databricks SQL endpoint.

All the code for this demo is available in the Azure Databricks Essentials retail demo repo, and it makes use of a TPCH dataset. You can clone the repo into your Databricks Workspace to get started developing and deploying the DLT pipeline. If you are new to DLT, you can follow the quick start tutorial to get familiar.

To develop the DLT pipeline we have four Databricks notebooks, structured to help you easily develop and share all of your ingestion, transformation and aggregation logic.
